Even well-funded startups with strong teams can fail if product-market fit is not rigorously validated early. A significant number of companies still prioritize rapid development and scaling over confirming real user demand.

According to CB Insights, about two-thirds of product-market fit (PMF) failures come from early-stage companies that never manage to find a real market for their product. However, it is not only a seed-stage problem. Around 20% of Series B and later companies also point to weak PMF as a key reason things did not work out.

Another data point from the Harvard Law School Forum on Corporate Governance suggests that around 75% of venture-backed startups fail to reach a positive exit outcome (IPO or profitable acquisition), often because they scale prematurely or build without sufficient user validation.

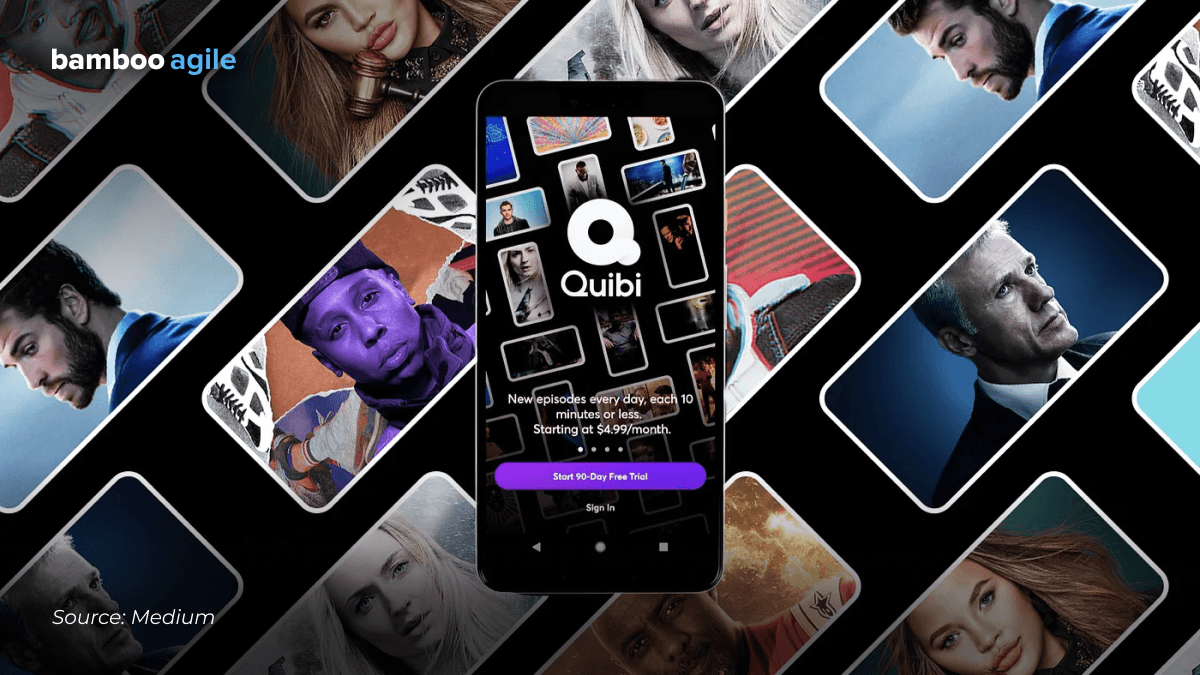

Such failures are common, and Quibi is one example. Backed by nearly $1.75 billion and led by experienced executives, it launched with a fully polished product but without properly validating how users actually wanted to consume short-form video.

Quibi never ran an MVP (minimum viable product) or an experimental beta to test which content and features resonated with its target users. It never even ran focus groups. As a result, it shut down within six months.

‘Any startup that feels as though they don’t need to launch an MVP or beta is fooling themselves. Mistakes will be made and money will be wasted. You just can’t take shortcuts here,’ says Jaryd Hermann, the creator of the newsletter How They Grow, which covers startup success and failure patterns.

It is often at this stage that external perspective is valuable. MVP consultants can define what actually needs to be validated and strip away assumptions that do not hold up under real user feedback. But the difference between a mediocre consultant and a strong one is stark – and learning how to tell them apart is what ultimately protects your product.

Who is an MVP development consultant?

An MVP development consultant is a professional who helps startups or businesses build a Minimum Viable Product – the simplest version of a product with core features to test the market quickly.

They usually help with:

- Defining essential features

- Product strategy & validation

- UX/UI planning

- Choosing the right tech stack

- Managing developers/designers

- Launching fast and collecting feedback.

In short, they help turn your idea into a testable product with less risk and lower cost.

Should you hire an independent MVP development consultant or an MVP product development agency?

Both options can look similar, but they operate quite differently.

An experienced independent consultant (for example, a senior product leader with a track record) often brings sharp focus. They have likely led multiple product cycles end-to-end and can quickly identify what matters and what does not. This can be particularly effective in early-stage discovery, where clarity is more valuable than velocity.

However, independence has its limits. One person can only go so far when your MVP requires coordinated effort across product strategy, UX, engineering, and possibly even go-to-market alignment.

Agencies, on the other hand, are built for that coordination. With a good MVP development consulting firm, you are effectively buying a system: cross-functional expertise, established workflows, and the ability to move from idea to tested product without stitching together multiple freelancers.

That said, MVP development agencies vary widely. Some lean heavily into the process but lack strategic depth. Others excel at delivery but do not challenge assumptions enough.

So here is how to compare these two minimum viable product development consultancy options:

| Criteria | Independent consultant | MVP development agency |
|---|---|---|
| Expertise depth | High, often specialized | Broad, across multiple domains |
| Execution capacity | Limited to individual bandwidth | Scalable team with defined roles |
| Speed of delivery | Fast in decision-making, slower in execution | Faster end-to-end due to team setup |
| Strategic input | Strong, experience-driven | Varies by agency maturity |
| Flexibility | High, adaptable engagement | Structured, but sometimes rigid |
| Risk distribution | Relies heavily on one person | Shared across team and processes |

5 things that separate a genuine MVP product development consultant from an order-taker

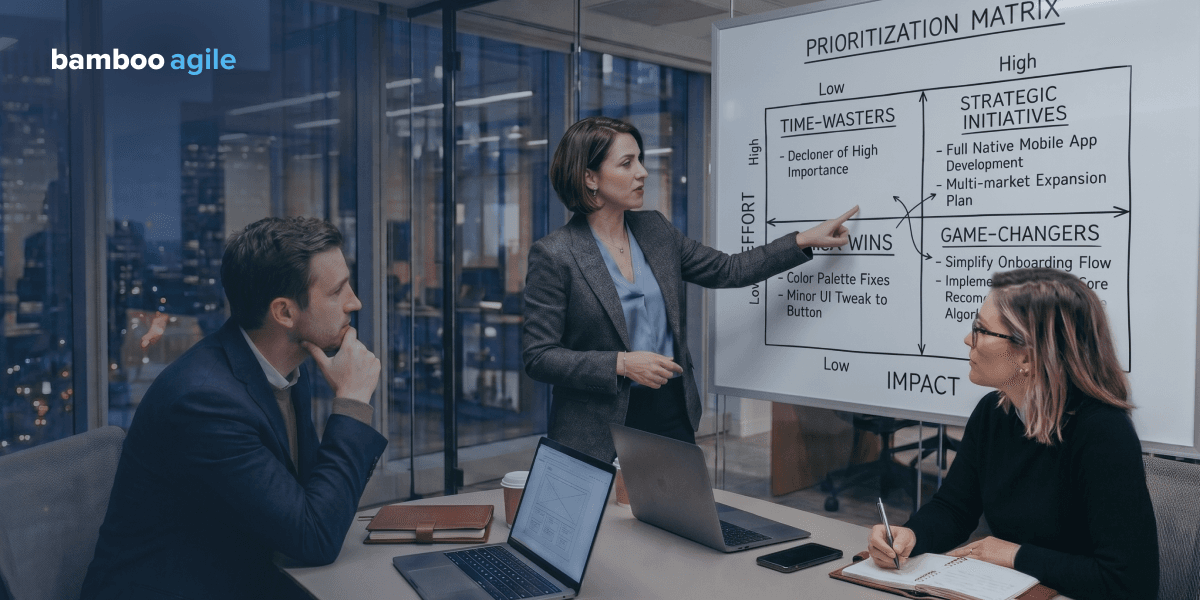

1. They scope against the assumptions you need to validate, not what you want to build

A strong consultant will often ignore your feature list at the start. Instead, they will try to isolate what actually needs to be proven for the idea to work. That might lead to a much smaller and sometimes completely different MVP than what you had in mind.

Dropbox is a well-known example. Before building the product, Drew Houston created a short demo video explaining how it would work, to test whether people understood the value and cared enough to sign up. The video alone drove tens of thousands of signups, so demand was validated without building the system itself.

In the same spirit, instead of building a full marketplace, a consultant might suggest manually matching a few users behind the scenes just to see if the core interaction works. It can feel like cutting corners, but it is really about isolating the one assumption that matters most and testing it as directly as possible.

2. They have relevant domain expertise

Inexperienced consultants often default to generic MVP advice: move fast, simplify, and test quickly. By contrast, someone with real expertise in the subject area will immediately introduce legal, operational, or other industry-specific constraints that change how validation can even be approached.

In healthcare, even a simple validation test can be limited by data protection rules, clinical responsibility, and approval processes. In automotive or mobility, it is often slowed down by safety requirements and physical-world dependencies. In energy or utilities, infrastructure and integration are the main constraints: you cannot simply test a feature in isolation because systems are interconnected, legacy-heavy, and often regulated.

For an MVP development consultant, domain expertise is understanding what cannot be assumed away in a given industry and adjusting what MVP even means before any building starts.

3. They push back on your scope

A real consultant will regularly question whether something needs to be built at all. Brian Chesky, co-founder of Airbnb, shared during his Blitzscaling talk at Stanford University that he and the team started by manually onboarding hosts, taking photos themselves, and handling bookings directly. Before anything else, they needed to confirm that people were willing to rent out space and that others would pay for it.

A genuine consultant will most likely interrupt you, especially when something does not clearly contribute to learning. Here is how that sounds in practice:

- Why do we need this feature for the first version?

- What would we lose if we did not build this at all?

- Can we test this manually first?

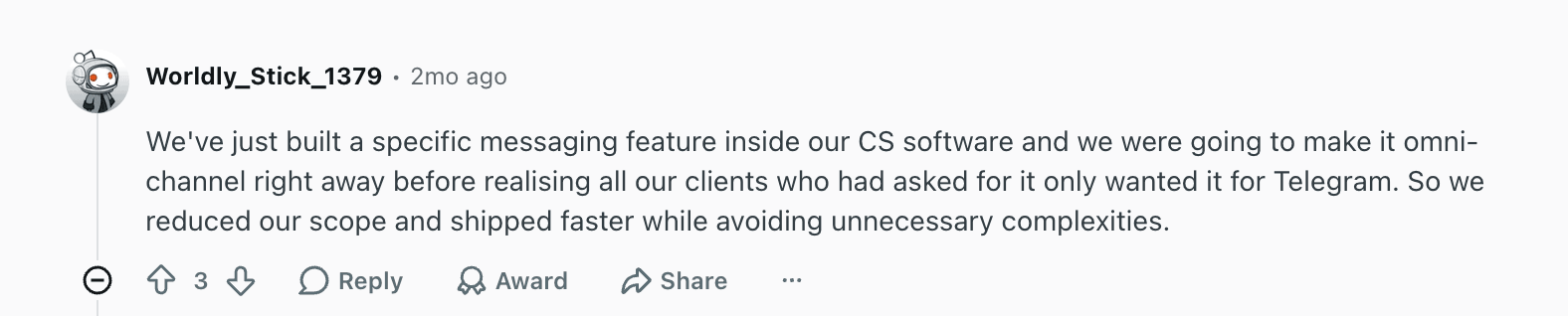

Reddit users also often note the importance of this point:

4. They have a defined process for handling scope changes

Scope always changes during MVP work. The difference is whether those changes are reactive or structured.

Instagram started as a much broader product called Burbn. It included check-ins, plans, and multiple features. When Kevin Systrom, one of the co-founders, looked at usage data, it became clear that people mainly used the photo-sharing part. Eventually, the team removed everything else and refocused the product.

That decision was not random; it was based on observing behavior and adjusting scope accordingly. A good consultant builds this into the process from the start: define what success looks like, run a test, and then decide what to keep, change, or remove based on evidence. Otherwise, scope changes tend to come from opinions or stakeholder pressure.

5. They can show post-launch outcomes

This usually becomes clear when you ask a very simple question: ‘So what changed after launch?’ If the answer circles back to features, timelines, or how smoothly the build went, you are likely talking to someone focused on delivery. As Eric Ries, the creator of the Lean Startup methodology, put it, ‘Success is not delivering a feature; success is learning how to solve the customer’s problem.’

That mindset changes what ‘proof’ looks like. Instead of showcasing screens or functionality, MVP development experts will point to outcomes like whether users converted, whether behavior matched expectations, or whether the team had to rethink the core idea entirely.

There is also usually a level of specificity. Not ‘it went well’, but something closer to: what metric moved, what did not, and what decision followed. The absence of that kind of clarity is often a sign that only execution happened, not real validation.

What questions should I ask an MVP consultant before hiring?

Hiring an MVP development consultant is mostly about understanding how they think under uncertainty. The same brief can produce either a validation-focused experiment or a feature-heavy build. It depends on how they approach it. The questions in the table below are designed to surface that difference.

| Question | Good answer | Poor answer |
|---|---|---|
| 1. What do you think we need to learn from this MVP, and how would you scope it to learn that? | Starts from assumptions and hypotheses, reduces scope to isolate one or two critical risks. | Starts from features, timelines, or ‘version 1 of the product’. |
| 2. Can you show me an MVP you have delivered in a similar domain, and what happened after launch? | Talks about outcomes: what was validated, what changed, what was killed or iterated. | Focuses only on what was built, with no clear post-launch signal. |
| 3. How do you handle scope changes mid-build, and can you walk me through a specific example? | Describes a structured process tied to learning (metrics, experiments, re-scoping based on evidence). | Describes being flexible without a clear decision framework. |
| 4. What would you cut from our brief right now, and why? | Immediately removes non-essential parts and explains what does not help validate the core idea. | Avoids cutting anything or tries to preserve everything. |
| 5. How do you decide when an MVP is ready to launch vs when it needs another sprint? | Defines readiness in terms of validated learning or signal thresholds. | Defines readiness as ‘feature complete’ or ‘polished enough’. |
| 6. What is the riskiest assumption in this idea, and how would you test it first? | Identifies a specific assumption and proposes a minimal experiment to isolate it. | Gives a general answer like ‘we will test everything step by step’. |
| 7. What would make you advise us to stop this MVP completely? | Mentions clear failure signals (no user interest, no willingness to pay, invalid assumptions). | Struggles to define stopping criteria or always pushes toward continuation. |

The pattern is usually visible across multiple answers. Strong consultants naturally talk in terms of risk, learning, and decisions. Weaker ones default to delivery language. If most answers feel like project planning rather than validation design, you are probably not speaking to someone who will challenge assumptions in a meaningful way.

Red flags to walk away from

The patterns below indicate that you are probably speaking to a delivery-focused vendor rather than an MVP product development consultant who is actually trying to reduce product risk.

- They cannot name a specific assumption your MVP needs to validate

This is one of the most consistent failure signals. If there is no clear hypothesis, there is nothing to test.

- They have no examples of clients who iterated after launch

It aligns with the findings of ‘A Systematic Mapping Study and Practitioner Insights on the Use of Software Engineering Practices to Develop MVPs’, which suggest that MVPs are meant to function as iterative experiments, where learning continues after launch rather than ending at it.

- They do not plan a discovery phase

This will lead to months of building followed by ‘nobody asked for this’.

- They promise a fixed delivery date before the scope is agreed upon

Such fixed deadlines and a countdown timer force scope inflation and rushed decisions.

- They never define failure conditions

If there is no agreed point where the MVP is considered not working, teams keep building even when signals are negative.

MVP development consulting and other related services by Bamboo Agile

At Bamboo Agile, MVP work is usually delivered as a combination of strategy, product thinking, and engineering execution, depending on the stage of the idea and how defined the assumptions are.

With over 20 years of experience in software development, our team has delivered MVPs across a wide range of industries, including highly regulated domains such as finance, healthcare, education, and enterprise systems. We have collaborated with clients globally, with a strong presence in the US, UK, Switzerland, Austria, and across Europe, which gives us practical exposure to different market constraints, compliance environments, and product expectations.

Our MVP development services include:

- CTO as a Service. This is for teams that do not yet have strong technical leadership and need a fractional CTO to make architectural and engineering decisions early.

- Discovery phase. We deliver structured work to define assumptions, validate the problem space, and optimize the scope before development starts.

- Prototyping. We can create lightweight interactive models used to test flows and concepts before engineering investment.

- Proof of Concept (PoC) development. This is a technical validation of whether something is feasible or integrates as expected.

- MVP development. Our team builds the smallest usable version of a product that can be tested with real users and generate feedback.

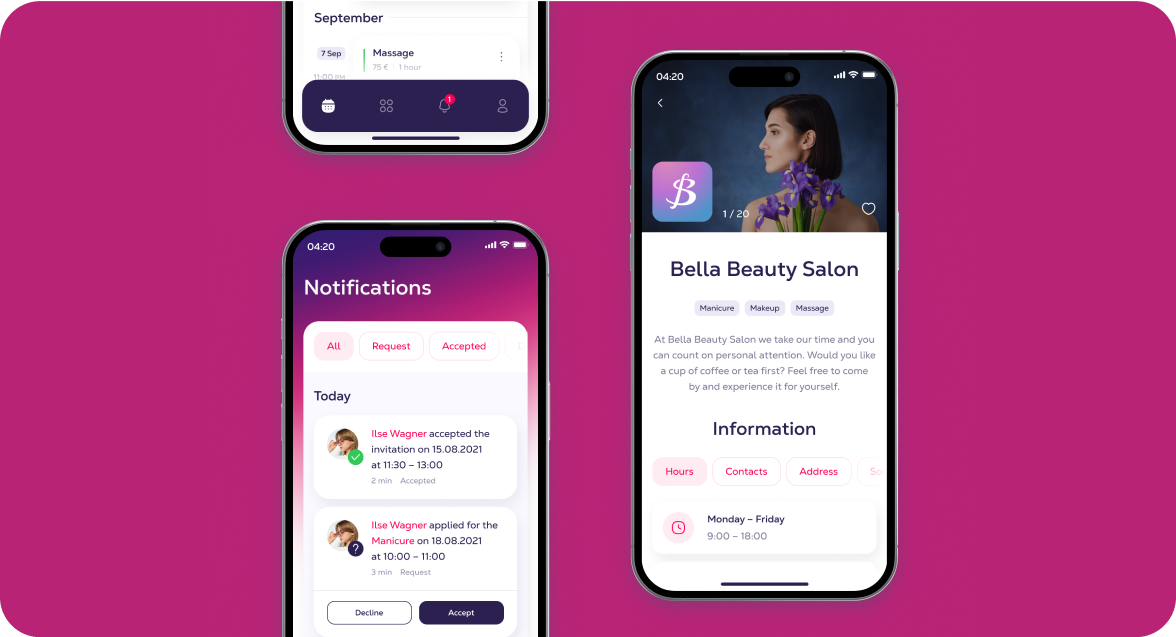

Salon Booking App

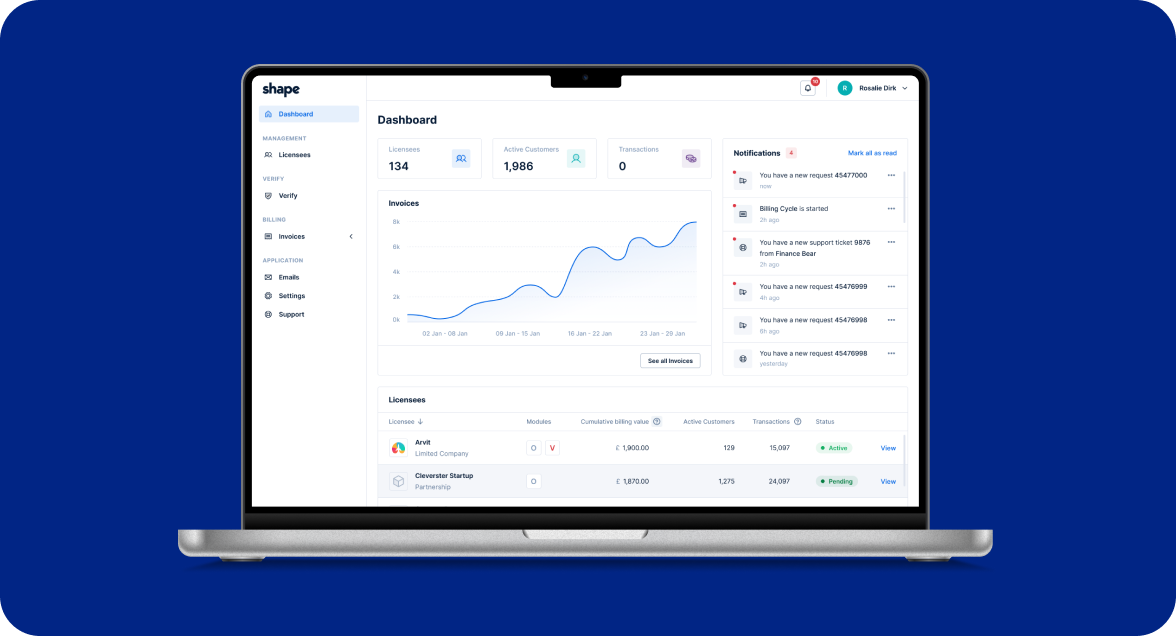

Shape

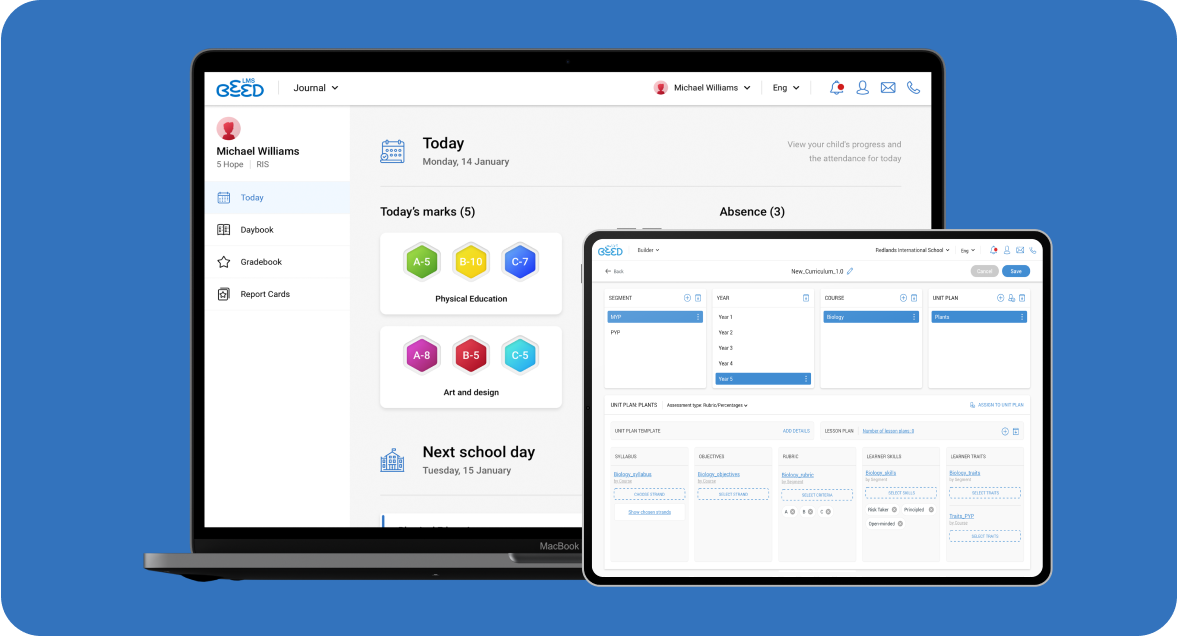

School OS

Rankmapp

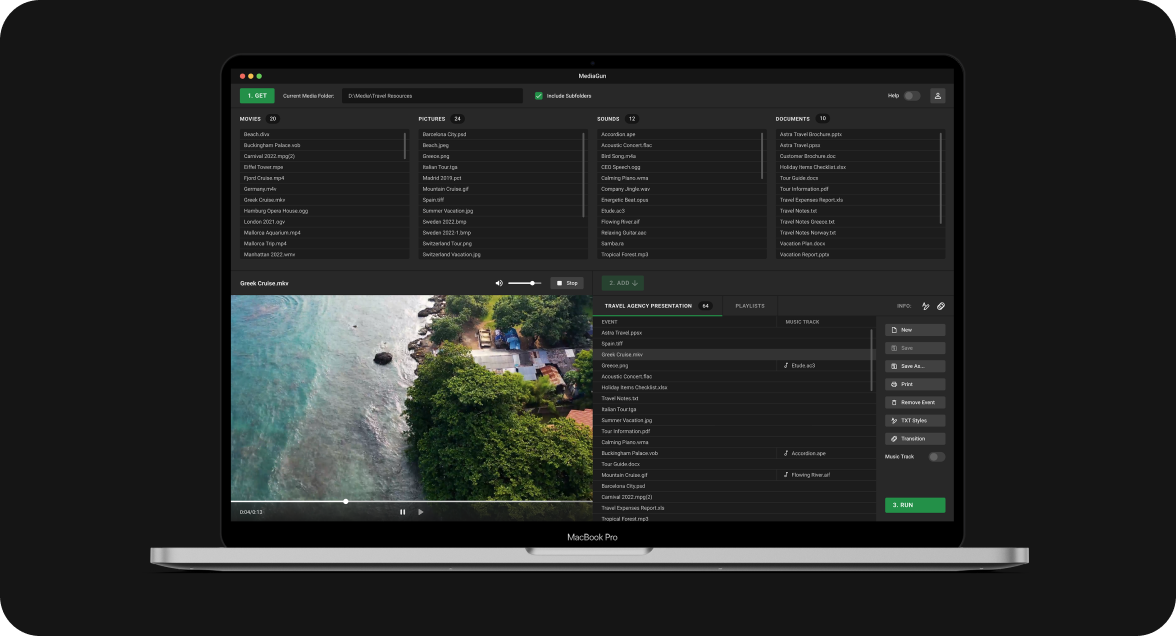

MediaGun

BeED LMS

Conclusion

We have looked at what differentiates a strong MVP consultant from an order-taker, what questions to ask, and what red flags to watch for. Based on that, below are the key takeaways to keep in mind when making a decision:

- A real MVP product development consultant will define what needs to be validated, not just what needs to be built

- If they do not challenge your scope early, they probably will not protect you from overbuilding later

- Strong consultants speak in terms of assumptions, risks, and learning

- Always ask about post-launch outcomes

- Domain expertise is essential in complex industries – it defines what MVP even means

- If there is no discovery phase, you are likely skipping the most important part

- A good consultant will tell you what not to build and explain why

- No clear failure criteria = no real validation process

- If everything sounds easy, fixed, and predictable, then it is probably not MVP development consulting.

The hiring conversation itself is usually enough to tell the difference. The right consultant will reduce scope, introduce constraints, and focus your attention on what actually needs to be proven.

If you want a second opinion on your MVP scope or approach, reach out to Bamboo Agile. We can help you define what to test, how to test it, and what not to build yet.